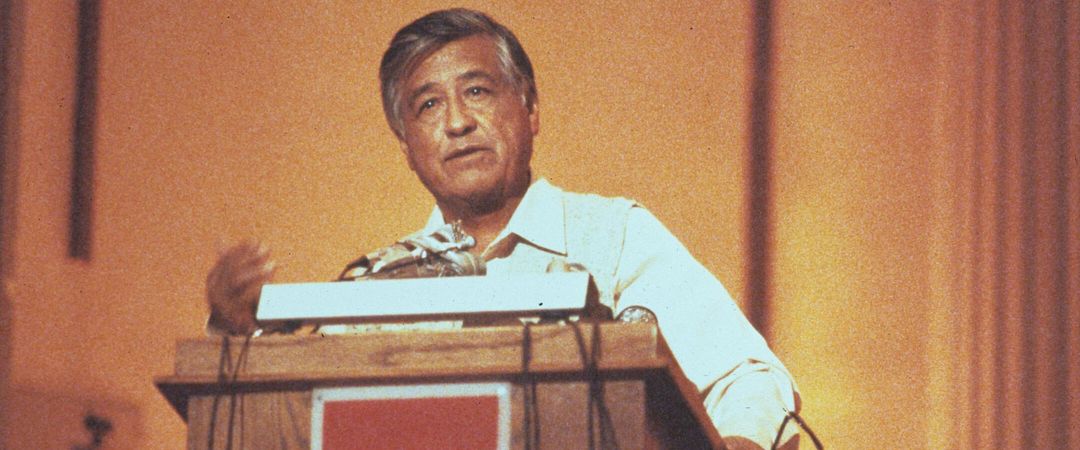

Professor and lead of the UW’s WiNGS Lab Suman Banerjee and his team develop innovative solutions for real-world problems.

Banerjee is the John P. Morgridge chair of computer sciences at the UW and leads the Wisconsin Wireless and Networking Systems (WiNGS) Lab on campus. He joined the UW in 2003 and helped create the WiNGS Lab in 2005.

Help us ensure the UW remains an affordable, world-class institution and engine of innovation for generations to come.

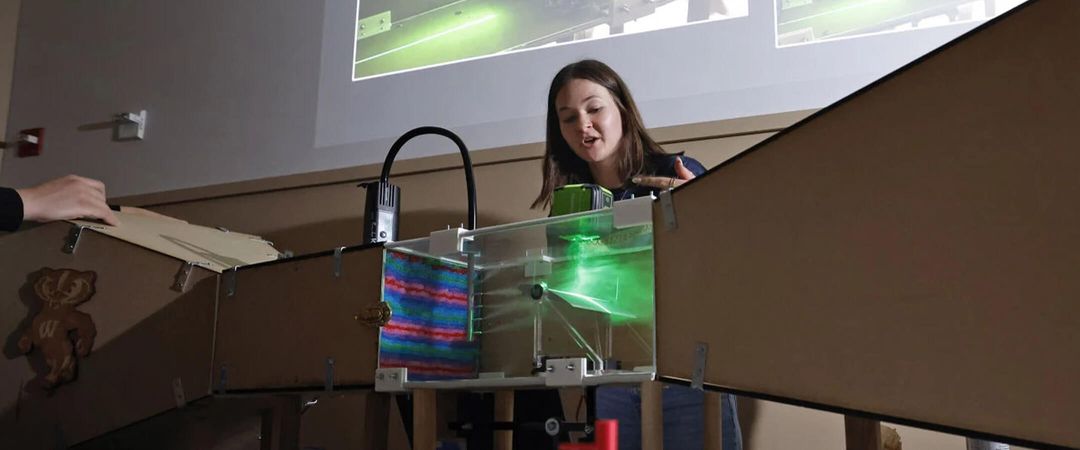

The lab uses mobile and wireless networking technology to create projects that solve real-world problems, and Banerjee and his team design algorithms, carry out analysis, and build functional prototypes. From creating augmented reality headsets that keep firefighters safe to building wearable monitors that allow physical therapists to offer more accessible health care, the WiNGS Lab turns big ideas into practical solutions.

“We do very practical things that can have a real impact,” Banerjee says. “We are always looking for interesting collaborations with people who feel that they have an interesting problem.”

What is unique about the field of computer science, and why did you pursue it?

Computer science, as a discipline, is blessed because we can have a large impact immediately if we do the right things. In the mobile wireless space, we have a very direct path to impact. For example, in our ongoing work, we are trying to build what we call a cognitive AI assistant. Essentially, it is a little wearable with a small camera that looks at your surroundings. So, what you are seeing, the camera is also seeing, but maybe more accurately. It uses a little earpiece and runs a large language model, but in a very carefully designed manner, and it can give you guidance about things you may not see. It may be useful, for example, for people with visual disabilities. I think if we build it and do it right, we can deploy it immediately. That is an advantage, and a satisfaction, that comes right away. We are blessed that we can have a pretty immediate impact, and I think that’s very uplifting. And the technical challenges, of course, are really interesting.

Your lab also creates applications used in health care. What innovations have you developed for that field?

We realized that many applications in health care can benefit from various wireless technologies. We had a project where we built a wearable watch that can track the motion of your arm as you move your hand. One application we focused on a lot was physical therapy. In physical therapy, you do exercises, and the wearable tracks your arm position as you do them. From that, you can estimate whether you’re doing the exercises correctly, whether you’re doing the correct number of repetitions, and what your range of motion is. We enabled physical therapists to do this through what we call remote therapy, where you don’t have to come to the doctor’s office for your therapy. You do it at home, and the doctor can observe your activities as you do them.
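
The interview does not describe how the watch turns arm motion into feedback for therapists. Purely as an illustration, the minimal Python sketch below assumes the wearable already reports a joint angle in degrees at a steady rate; the function name, the 30- and 120-degree thresholds, and the sample data are hypothetical, not the WiNGS Lab’s actual method. It counts a repetition each time the arm goes from a low position up to a high one, and it reports range of motion as the spread between the smallest and largest angles.

```python
from dataclasses import dataclass


@dataclass
class ExerciseSummary:
    repetitions: int
    range_of_motion_deg: float


def summarize_exercise(angles_deg, low_deg=30.0, high_deg=120.0):
    """Count repetitions and measure range of motion from arm-angle samples.

    Hypothetical helper: assumes angles_deg is a list of joint angles (degrees)
    already estimated from the wearable's motion sensors. A repetition is
    counted each time the angle reaches high_deg after first dropping to
    low_deg; the gap between the two thresholds (hysteresis) keeps small
    jitters near a single threshold from being counted twice.
    """
    if not angles_deg:
        return ExerciseSummary(repetitions=0, range_of_motion_deg=0.0)

    reps = 0
    armed = False  # True once the arm has returned to the low (starting) position
    for angle in angles_deg:
        if angle <= low_deg:
            armed = True
        elif angle >= high_deg and armed:
            reps += 1
            armed = False  # wait for the next return to the low position

    # Range of motion: spread between the lowest and highest sampled angles.
    rom = float(max(angles_deg) - min(angles_deg))
    return ExerciseSummary(repetitions=reps, range_of_motion_deg=rom)


if __name__ == "__main__":
    # Made-up samples: two full lifts, then a third that stops short of 120 degrees.
    samples = [10, 60, 125, 80, 20, 70, 130, 90, 15, 50, 110, 40]
    print(summarize_exercise(samples))
    # -> ExerciseSummary(repetitions=2, range_of_motion_deg=120.0)
```

Judging whether each repetition is done correctly would take more than this, for example comparing each lift’s peak angle against a therapist’s target, but the threshold-and-count structure is one simple starting point.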

More recently, we had a project where we showed that we can wirelessly estimate a person’s vitals, such as heart rate, respiration rate, and other breathing characteristics. We built our system for adults, but I’m interested in pushing this further toward other very critical domains of health care, especially the neonatal intensive care unit (NICU). Preterm newborns are incredibly vulnerable, and their skin is very soft. So, if you can [take their vitals] wirelessly and observe them, that is very, very powerful.
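
The interview does not say how the lab’s system extracts vitals from wireless signals. One textbook approach, shown here only as an assumption-laden sketch, treats the chest motion recovered from reflected signals as a time series and looks for the strongest repeating frequency within a band: roughly 0.1 to 0.5 Hz for breathing and 0.8 to 2 Hz for heartbeat. Those bands, the function name, and the synthetic data are illustrative assumptions, and recovering the chest-motion signal from the radio hardware, which is the hard part, is not shown.

```python
import math


def estimate_rate_per_minute(signal, sample_rate_hz, min_hz, max_hz):
    """Return the strongest periodic rate within [min_hz, max_hz], in cycles per minute.

    Hypothetical helper: evaluates the discrete Fourier transform only at the
    frequency bins inside the band and picks the bin with the most power.
    Frequency resolution is sample_rate_hz / len(signal), so longer
    recordings give finer estimates.
    """
    n = len(signal)
    if n == 0:
        raise ValueError("signal is empty")
    mean = sum(signal) / n
    centered = [x - mean for x in signal]  # remove the constant (DC) offset

    best_freq, best_power = None, -1.0
    for k in range(1, n // 2 + 1):
        freq = k * sample_rate_hz / n
        if not (min_hz <= freq <= max_hz):
            continue
        # Real and imaginary parts of DFT bin k.
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power

    if best_freq is None:
        raise ValueError("no frequency bin falls inside the requested band")
    return best_freq * 60.0


if __name__ == "__main__":
    fs = 10.0  # assumed 10 samples per second, recorded for 60 seconds
    # Synthetic chest motion: breathing at 0.25 Hz plus a much smaller heartbeat at 1.2 Hz.
    chest = [
        math.sin(2 * math.pi * 0.25 * i / fs) + 0.1 * math.sin(2 * math.pi * 1.2 * i / fs)
        for i in range(600)
    ]
    print("breaths per minute:", estimate_rate_per_minute(chest, fs, 0.1, 0.5))
    print("beats per minute:", estimate_rate_per_minute(chest, fs, 0.8, 2.0))
```

With the synthetic input, the breathing band should report about 15 breaths per minute (0.25 Hz) and the heartbeat band about 72 beats per minute (1.2 Hz). Real reflections are far noisier, and a practical system would likely use an FFT library and filtering rather than this direct loop.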

Are there any other projects your team is working on right now that you are particularly excited about?

We are trying to develop a holistic solution for public safety scenarios, for firefighters and first responders. When first responders go inside a building, they have no idea what is inside: things may have fallen, there may be fire, the layout may have changed, and they don’t know where the victims might be. So, what kind of tools can we give them to make their work a lot more effective?

We have been working on a project where we leverage augmented reality headsets, which are wearables mounted on the head. Using wireless signals, there are ways to construct maps of what the wearers are observing in real time. The processing relies on edge computing, which brings computational capabilities closer to end users instead of leaving them in the cloud. This allows different people with headsets to each share their view of the world with the platform, which then reconstructs the map and shares it back. So, we can create and use these maps to navigate indoors and to detect objects from the data. We have also partnered with firefighters, and I think that engagement really helps drive how we approach the problems and the projects. How can these technologies augment their ability to understand the scene and do the right things?

We are also looking at how these technologies can help first responders who enter a physical space detect unconscious victims who cannot call for help. As I mentioned before, we can detect a person’s heart rate. If we can do that, then we know there is a person there, and we can identify them. And these wireless signals can go through walls. I think there are a lot of interesting problems here, and this is exciting for us. We can really do something that has potential impact.

When you think about the future, what comes to mind?

I think the landscape is changing very rapidly with the availability of new AI capabilities. Large data centers have the computing capacity to run these models very easily, but if you want to take advantage of all of that in small devices, such as IoT devices, headsets, and wearables, the power budget is constrained. Doing what these large language models promise, but in a small or mobile form factor, and distributing the workload among multiple devices are very challenging problems that need to be solved to make this technology a lot more ubiquitous.

As for application domains, our interests are leaning toward health care. There is a lot of potential for these technologies to have an impact in that field. We are trying to work more closely with medical professionals, so we have collaborations with doctors to understand where we can have the best impact and how the technology should be refined to meet the challenges they experience.

As someone who finds solutions to real problems, what would you encourage people to be curious about?

There is quite a big gap between what technologies can do and what people can envision for them. When we think about new technologies, there are a lot of exciting opportunities that we are not thinking about because we just don’t know they are possible. So, when you look at technologies, think about, ‘What does this do in your daily life? What does this do in the work you’re doing? How can that change things? How can this change what I think?’ The imagination has to be there to connect the technology with its potential future applications.